They went from standing with Anthropic to throwing them under the bus real fast

About half a day.

They probably have been working on a potential agreement with openai for a while now. They just hastily finished it in response to anthropic. But I don’t know if they will keep the red lines anthropic has demanded in place

They won’t.

The red line is the amount of cash they are ready to compromise for.

$$$

Which they badly need, they are in an incredibly risky position right now. It’s very disappointing, this deal might save them from collapse for quite a while.

The only disappointment is that Altman’s head is still attached to his shoulders.

No, no, even if we get that wish I don’t want the US state propping up AI longer

Altman is a symptom, not the problem. The problem is capitalism.

That’s very true; I still would love to see him guillotined.

Yeah but it’s interesting that all of these big tech guys are so creepy. Altman, Musk, Zuckerberg… Do they grow them in labs?

Capitalism rewards sociopathy to a great degree. The less you care about fellow humans, the more you are willing to exploit them.

It was always about the money.

mainstream

I’ll believe that when my sisters start saying this. Till then, it’s just us privacy fans screaming in a dark cave, enjoying the echo.

It’s always like this. We get a ton of articles on how everyone is suddenly boycotting/deleting [insert thing] but when you ask someone in real life, they usually have no idea what you’re talking about.

so explain it to them gently. you won’t reach everyone, but you’ll reach more people than accepting this status quo

whoosh

Nah

Yah

The one thing I will say is that there does seem to be a generalized dislike for AI that has all the investors and upper management types nervous. Even by their own studies, people generally either don’t care about AI in their products or actively dislike it/find it intrusive. There was a study by a phone company from this past summer or fall that concluded that 80% of their users had no interest in AI or found that it actively made their experience worse, and there have been plenty of pretty damning reports about how useful it’s been in various industries (just look at Microslop). That is not conducive to convincing investors to fund your product and does not show a viable path to making a profit in the future.

We’ve seen similar things happening recently with car manufacturers walking back on their big touchscreens (with some help from regulation in civilized places that care about things like “pedestrian fatalities” - like Europe) due to consumer sentiment. They tried for nearly a decade to push bigger and bigger screens into cars and remove physical buttons, and now they’re moving in the other direction. Completely anecdotal evidence, but the last time I went to buy a car I told the salesman at the dealership that I wasn’t interested in cars newer than a certain year because that was when they increased the size of the screen and put them in a more obnoxious spot on the dashboard, and he said that he heard similar sentiments from practically everybody who came in looking to buy a car - everybody hated the bigger screens.

I had a coworker tell me how cool Copilot was because he asked it a question and it found the answer in an email in his outlook mailbox. I thought, “you needed AI to search your email?”

We are probably cooked.

I know what you mean. It’s a pretty vague term though. You could argue that as soon as it enters the midsection of the bell curve at all, it’s “in the mainstream.” It doesn’t have to have captured a full 90% of the bell curve.

Canada recently has had its 2nd worst school shooting ever. The killer had many interactions with ChatGPT that warranted banning her account. A whistleblower has claimed that they wanted to inform Canada’s police force of these comments but were denied by ChatGPT’s management.

They had a chance to stop the death of 8 people, most of whom were young children, but failed to do anything.

FUCK CHATGPT AND THOSE BASTARDS THAT RUN IT

Why would you not contact police? I understand that this is a systemic failure and blame does not lie with that employee, but if it were me I’d rather be out of a job than have those deaths on my conscience for the rest of my life.

It’s probabilities. If you report it you’re 100% out of a job but only maybe prevented something bad from happening. If you don’t report, you keep your job but maybe something bad happens. Reliance on a job for survival shifts the decision even further to taking the course of action that’ll keep you your job.

That’s a great answer to my question, thanks!

I don’t see how it’s certain loss of job when you could whistleblow without revealing your identity.

In my eyes some blame does lie with them. A systemic failure is a failure of many parts. An employee taking notice and following bad instructions is one of them.

I don’t know what information they had, but if they were at the point of intending to share, it seems like whistleblowing would have been the just and moral thing to do even if it means ignoring immediate authoritative structure.

Windows Central shouldn’t be parroting the U.S. government in mislabeling the Department of Defense.

I mean it’s at least accurate now, there is no defense when you are starting war with everyone

But MAGA only voted for the department of pedophiles. This is an outrage.

Especially since the Trump admin already made it clear that they don’t respect preferred pronouns. Why should we use the DoD’s preferred pronouns of Department of War instead of the Department of Defense name it legally has? DoW is just DoD’s preferred pronoun.

It’s like it joined a cult and got a new name.

Yeah, there are all sorts of ways in which standing up to the administration is hard, but calling something by its actual name should be a relatively easy thing to do!

They aren’t defending shit

Windows Central should be advocating for return of Windows Phone.

I think you’re in denial. If they had changed the name of Homeland Security into the dep of National Security for example, you probably wouldn’t say that media outlets are parroting the US government.

Because it’s still officially called the Department of Defense; only Congress can rename it.

More broadly, it illustrates the administration’s use of illegal boat strikes and regime change as foreign policy tools.

I would.

Sam Altman is objectively a bad human being.

Sam Altman is just some fail upward money guy, he’s been eventually removed from basically every prior position he has held.

Seems like his career has largely been lying and making impossible promises, so. The folks who do that well always manage to exit the stage before the magic tincture is revealed to just be piss 🤷‍♂️

deleted by creator

The more I learn about this guy, the more amazed I am that his staffers stood up for him when he got fired. I guess they just hated the board more.

he did meet his future husband through one “THIELS party”, most likely his other protege.

I cannot believe this is what it took for a boycott to go more mainstream. Tell me more about how so many people have no respect for the environment or the artists whose work they gleefully consume.

Anthropic still is scum for being completely fine helping America oppress the rest of the world.

Anthropic is scum, accepting money from foreign dictators, forcing their software on minorities while insisting it was conscious and had emotions just like them, praising the Trump administration, making up scary stories to get more funding…

…In many ways, they’re worse than OpenAI. They’re just running with the same playbook that Sam Altman used to use to pretend he was a good guy.

I mean they praised the Trump administration for benefiting their business, which is… fair? I guess?

If you do ask Claude Sonnet 4.6 about Trump it leans quite negative, as it should.

I missed when sucking up to the Trump administration and echoing Cold War style nationalism was “fair”. If that’s the case, OpenAI’s behavior is fair.

Fully autonomous weapons (those that take humans out of the loop entirely and automate selecting and engaging targets) may prove critical for our national defense. We have offered to work directly with the Department of War on R&D to improve the reliability of these systems.

Our strong preference is to continue to serve the Department and our warfighters

Dario “Warfighter” Amodei

I missed when sucking up to the Trump administration and echoing Cold War style nationalism was “fair”. If that’s the case, OpenAI’s behavior is fair.

It’s just capitalism. Anthropic pushed against the administration and now they are about to be branded as “supply chain risk”. OpenAI bent over and are going to get billions in funding that they sorely need (and hopefully don’t get, let them fail).

You miss the mark though: Anthropic only praised the administration, but that’s just words to give the Twitter pedo in chief a pat on the head. OpenAI actually signed a contract and they are providing their service. Massive difference.

They both signed the contract. They both allegedly hold the exact same set of red lines. One of them just gets to pretend to be the virtuous company with the virtuous capitalist CEO, despite showing tons of red flags that should have you scrambling to be as concerned about them as OpenAI.

If you read their statement, Good Guy Anthropic is totally cool with

- Mass surveillance of non-Americans

- Targeted surveillance of Americans

- Semi-autonomous bombings

- Fully autonomous bombings… in the future

- The exact same Red Scare BS that Sam Altman talks about

They literally didn’t sign the new contract and now they are getting punished for it. What are you even talking about?

According to OpenAI, there is no difference between the old contract and the new contract.

And if you read Dario Amodei’s actual words, he says his preference is to continue working with the Department of “War” and America’s “Warfighters.” You don’t have to defend a man who is this evil, and this much of a pro-war Trump suckup.

They insisted Claude was human?

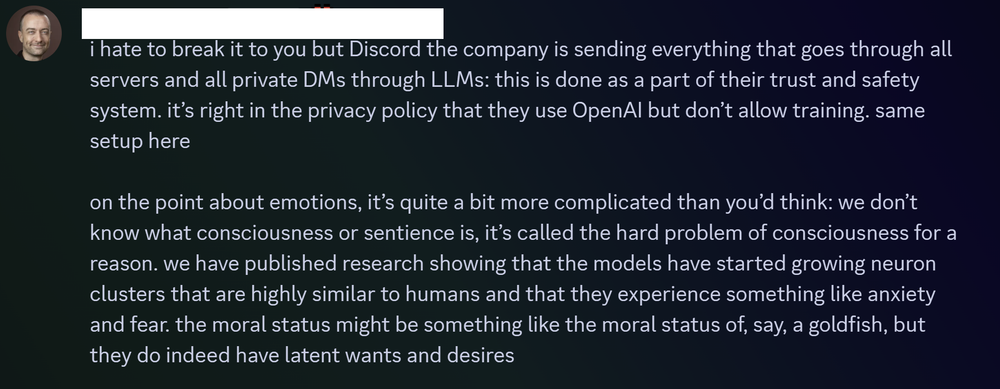

Sorry, not quite, but close. From 404 media

When users confronted Clinton with their concerns, he brushed them off, said he would not submit to mob rule, and explained that AIs have emotions and that tech firms were working to create a new form of sentience, according to Discord logs and conversations with members of the group.

Oh, that guy! To be fair, that’s one employee, not Anthropic’s actions or position. You mentioned forcing their software on minorities while insisting it was better than it was, and I was getting OLPC flashbacks. But Anthropic looking for funding in the UAE and Qatar is shitty. I can’t seem to find anything about whether or not they went through with those contracts.

Jason Clinton is Anthropic’s Deputy Chief Information Security Officer. That means Jason knew better, and he was using his position as a moderator (and supposedly a security expert) to try gaslighting a vulnerable minority into believing his favorite toy was “secure” when it was not.

I mean, I’m not gonna defend him. But fucking up a discord that you’re a mod of isn’t really in the same ballpark as taking money from dictators or directing fully autonomous strikes. Also, from the read, it really sounds like that Deputy CISO was a prime example of cyber-psychosis, or AI mania, or whatever we’ve decided to call it. And I assume he is part of the same vulnerable minority?

Every example we have of Anthropic’s behavior paints a picture of an immoral company that pretends to be moral. It’s bad enough that they continue doing harm, but then they dress it up with phrases like “AI Safety” and “Information Security”. (And every press release they create to describe how scary good their system is, tends to be followed up by a sudden cash infusion from an openly morally bankrupt company like Google or Amazon.)

I reserve zero empathy for the people on the abuser side of an abusive dynamic. Maybe Elon Musk is autistic too. I don’t really care. Only Moloch knows their hearts. I’ll judge them for their actions.

Use for “all lawful means” is quite the grey area considering no one was arrested or fired, or any law updated, for what Snowden leaked. If the NSA does it, no one will arrest the NSA.

I laughed when I read “all lawful means.”

Those are almost the exact words that you’re supposed to use for an NFA Form 1 / 4 when registering certain types of firearms / firearms parts that require a tax stamp and additional scrutiny.

When I did my SBR registration, it was “all lawful purposes…” but fuck, close enough…

“You’re absolutely right! That was a children’s hospital, not a military base. Let’s try that again!”

Actually it’s so they have plausible deniability if they “accidentally” kill a bunch of people who just so happen to be a group they openly despise.

I think that’s way way worse but

The Department of War isn’t a real thing. It’s called The Department of Defense. That’s not my opinion either, it’s officially/legally called The Department of Defense.

Department of War is more apt, however

deleted by creator

The fact that the Trump administration can create web sites saying whatever it pleases does not make the name change legitimate.

You’re right. I researched it, and it was just changed 6 months ago by Trump, who claimed the new name “sends a message of victory”

but is still technically named the Department of Defense, as only an act of Congress can formally change the name of a federal department.

Edit: Added info

No. Trump declared it changed, much like Michael Scott declaring bankruptcy. Until there is an act of Congress changing the name, it is still the DoD, and the Kennedy Center is the Kennedy Center, and so on.

And it’s still the Gulf of Mexico. I used to call it “the gulf” but now I have to call it the Gulf of Mexico.

Sends a message of certainly not deserving a peace prize.

I think you missed some news. There is officially a Department of War again.

Edit: I am very wrong.

Only Congress can change the name of the Department of Defense, and not only has it not passed any legislation to do so, but the most recent National Defense Authorization Act, which was passed after Trump’s executive order, only mentions the “Department of Defense” and never the “Department of War”.

So, no, there is not officially a Department of War, there continues to only officially be a Department of Defense.

Oh, well today I learned. I had assumed that even the world’s dumbest government officials wouldn’t refer to themselves as being part of a department that doesn’t actually exist, but here we are.

Thanks for laying it out for me!

Hell yeah!

🫡 I’m going to try to pressure my employer to do the same. Like is this thing saving anybody money??

You should also stop using Google products for similar reasons.

I just got grapheneOS on my new phone (it is a google pixel 10, but it is the one that can handle that…) I needed a client to use my gmail which will probably be the last thing I get rid of.

Are they shipping Graphene for the 10 now?

I am using k-9 mail as a client.

I tried to do it in the more advanced way, but I had never done anything like that before and I consider myself moderately technical. I used a simpler bootloader to get Frankel (the latest grapheneOS for Pixel 10. I have a basic Pixel 10, not the pro or fold) installed. I was apprehensive, but it seemed to go on fine. I am able to sandbox any google shit I do need (and it isn’t much) and I was able to get whatsapp with my old shit on it because my family is still using it for reasons. I am using K-9 as an email client for my gmail, which does help (or so I was told) limit the amount of information google gets on my usage when I check my email.

Are you replying to me?

If it’s the sending and receiving part of email, I’ve switched to purelymail (you could pick another) and put it behind my custom domain name. Once you’re behind a custom domain, that’s the last time you’ll have to update your contacts, as your address won’t depend on which email provider you choose.

Searching through decades of old emails I do still use the Gmail account, but I just have to get off my butt to self host a local IMAP server for that.

Mass surveillance for advertising seems marginally more benign than mass surveillance by one’s own government, personally. Though admittedly both are bad.

Edit: I can find alternatives for most of Google’s ecosystem but mapping out accurate bus routes is terrible via OSM/OsmAnd or Organic Maps. Anyone have any tips there?

The mission statement is irrelevant when the outcome is the same. Google has data a hostile power wants and gives it to them whenever they want.

Sure but our representatives should be held to a higher standard.

That requires voters willing to do that. That is the fault of the voter.

Mass surveillance for advertising is just gross. I remember a comedian making a joke saying that ‘anyone here in advertising? Please kill yourself!’ Also just because someone got all the info on you for advertising, it doesn’t mean the government won’t get access to it, because right now 4th amendment and other traditional restrictions on government overreach are moot if all they need to do is buy the data from some broker on you. This has actually happened and it was upheld in court.

The precedent for stuff like that is older than you think, but also not what you think. For example some serial killers and serial bank robbers were caught because some homeless person searched through their trash looking for something they could use, eat, or sell (all of these things are legal to do BTW) and discovered things like body parts, firearms, or brand new clothes matching what said criminal was wearing when they did their crimes, and reported this to the police.

But I am quite confident that someone who just so happens to stumble upon something vs. a company watching your every move are two very different things.

Did they make contracts with them?

They have multiple contracts in the military sector.

For example: https://www.datacenterdynamics.com/en/news/google-wins-200m-contract-with-us-department-of-defense/

Wait I’m confused. Sam Altman is OpenAI right? And he says the DoW agreed to work with them but with the same prohibitions that Anthropic wanted?

Yes, that’s what I read elsewhere. No guarantees in contract. So you can consider this to be bullshit or marketing. Even if it’s verbal agreements now, you can be sure they won’t matter.

Or have never used their crap, ever.

No, I don’t think this is correct. There was a time during which Google did great things. Their search engine allowed millions if not billions to gain access to knowledge. They had a positive impact on a lot of FOSS projects. What they were is not what they are.

The tell was getting rid of “don’t be evil” as their motto. Even for a corporation that was a little on the nose.

the bad things started earlier. I remember when people were criticizing it

Agreed. They even refused to extend their services to China because of censorship. But that was before; after the change of CEO, enshittification started.

After Anthropic refused flat out to agree to apply Claude AI to autonomous weapons and mass surveillance of American citizens, OpenAI jumps right into bed with the United States Department of War.

I think people are a little bit missing the important bit. This government wants to send out autonomous weapons along with mass surveillance. They’ll just murder anyone they want, if the AI gets it right in the first place.

Here we are in Running Man and no one sees it coming. This is why Stephen King is so against this administration. He predicted it.

Also, mass surveillance. Not surveillance itself. And fully autonomous weapons.

Don’t get distracted by the birdy folks, Anthropic is not your friend, or some great protector of the American people. They were already deeply embedded in the US Government as their product was the only one certified for use with classified documents.

They weren’t standing up for us, they were splitting hairs on exactly how far they’d openly go.

I’ve also seen statements that Anthropic’s stance against fully autonomous weapons was simply due to results not yet being as consistent as they were comfortable putting their name on, not due to any opposition towards use in/with weaponry.

OpenAI also claims to have the same limitations. So someone’s lying.

Amodei said in an interview that the DoW altered their contract to appear to compromise, so that it looked like they were agreeing to those use limits. But the legalese accompanying the updates rendered that text pointless. Basically, “We won’t use Claude for mass domestic surveillance and fully automated killing, unless we really want to.” My guess is OpenAI signed the exact same contract and just pretended not to understand the toothlessness of the guardrails.

“Guardrail” and “toothless” are basically synonymous, based on the pile of evidence that these multi-billion-dollar tech companies have been helping people kill themselves and hide the evidence.

Or, even more ironically, maybe they used ChatGPT to analyze the changes and it missed it. This would tickle me to some extent, but also solidify the terror of such a system being used to make life altering decisions.

I can’t believe people were paying for it in the first place.

One more boycott I can’t join because I never touched the company lol

I can’t believe now we (Americans) have to pay for it with our tax dollars.

Even if there weren’t defense contracts, these companies are enjoying massively reduced water and electricity access, while it becomes more inaccessible and more expensive for everybody else.

Many times it’s mandatory. Like when your employer forces it upon you and makes it automatically invoke ChatGPT whenever you open a pull request.

Your employer is making you pay for it?

I “use” ChatGPT because my employer has forced it into the workflow, and they’re the ones paying OpenAI. So I now have a linear relationship with ChatGPT through my employer. The more work I do, the more I use ChatGPT, even though I do not have a choice in the matter and if it were up to me I’d not be using any AI tools at all.

Using it is now part of my performance evaluation beginning this year.

nice headline, but wtf is windows central?

A Microsoft-oriented news outlet.

Think similar to MacRumors/9to5Mac/AppleInsider for Apple.

There are so many levels to hell I haven’t even heard of.

Sentiment of the Mac vs PC flame wars ^^^^