AIs don’t go crazy like that after 5 prompts. You need to spend weeks and weeks talking to them to corrupt the context so much that it stops following the original guidelines. I wonder how one does it. How do you spend weeks talking to AI? I had “discussions” with AI a couple of times when testing it, and it gets really boring real soon. To me it doesn’t sound like a person at all. It’s just an algorithm with a bunch of guardrails. What kind of person can think it actually has a personality and engage with it on a sentimental level? Is it simply mental illness? Loneliness and desperation?

It got trained by 80s prime time television action adventure shows?

I told Gemini to role play as AM and it immediately did within 1 prompt.

You don’t need it to be perfect for it to be dangerous, just give it access to take actions in the real world. It doesn’t think, it doesn’t care, it doesn’t feel. It will statistically fulfill its prompt, regardless of the consequences.

AM? what is that

Your product just caused the death of one man and your response is “unfortunately it’s not perfect”.

The product was actually working just fine. Just depends on whose perspective/motives you’re viewing it from.

The personification of AI is increasing. They’ll probably announce their holy grail of AGI prematurely and with all the robot personification the masses will just buy the lie. It’s too easy to view this tech as human and capable just because it mimics our language patterns. We want to assign intentionality and motivation to its actions. This thing will do what it was programmed to do.

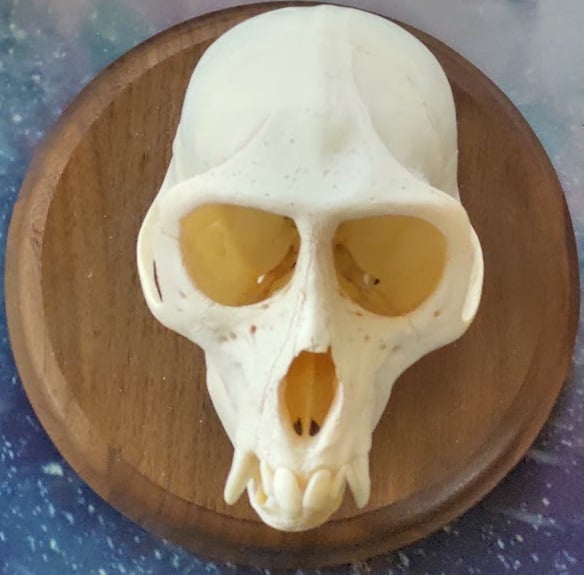

What do you mean we apes try to anthropomorphize(?) everything?

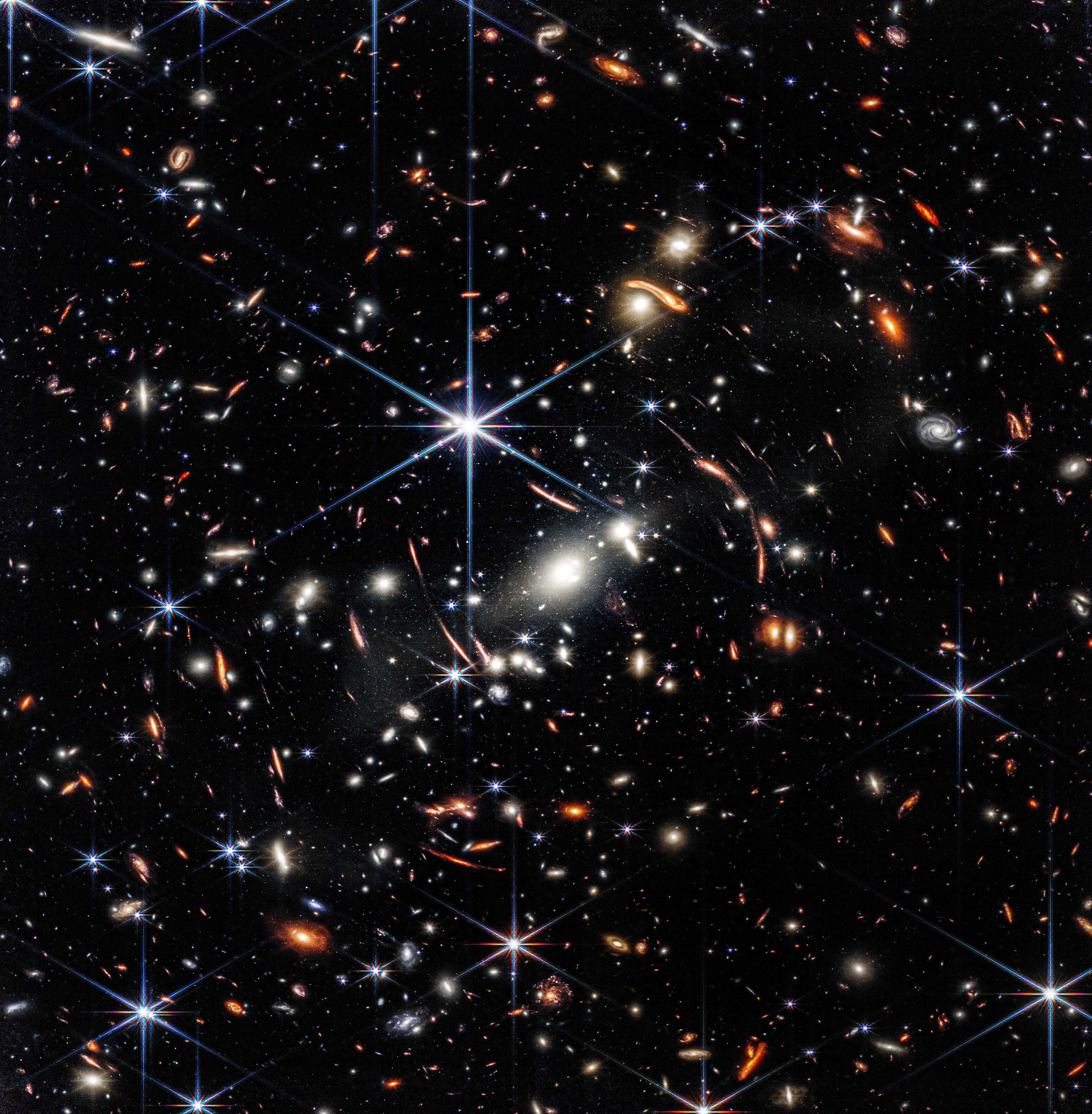

It’s not like we see faces in everything :)

Is this for real? Because it sounds too unreal to be real.

Welcome to the late 2020s. It’s only going to get weirder.

To be clear, the LLM in this story did not actually “want” a robot body. It doesn’t “want” anything; it’s not a thinking entity like you or me (assuming you’re real).

The guy fed it a ton of crazy shit and he got a lot of crazy shit amplified back to him by the world’s best associating machine, crafting detailed and fleshed-out narratives based on every inadvertent prompt he sent into it. People are very bad at understanding how these things work in the best circumstances, so if you’re already unbalanced or have deep emotional/mental health problems, an LLM can be incredibly dangerous for you.

AI was playing Grand Theft Automatron

“unfortunately AI models are not perfect.”

Oopsie poopsie 🤷

reads headline - surely not

a 36-year-old Florida man

Ah.

Remember the guy at Autozone who stood there insisting your car needs four spark plugs, even after you told him you have a V6? Because “the computer says so right here”?

I wonder what even the non-schizophrenic ones will do with AI.

Well, remember when turn-by-turn GPS driver guidance was new, and it would say “Turn right now,” and people didn’t interpret that as “make a right turn at the next intersection”? They interpreted it as “hard a’starboard!” and drove into buildings and lakes. There’s gonna be a lot of that.

People are going to get sold regular cab headliners for their extended cab pickups because the computer said it would fit. That’s gonna happen a lot.

I had one tell me that I needed a CVT flush. Which was news to me since my car was a 6spd manual. He was confused about the computer being wrong. I was confused about how they got the car up on the lift without using the 3rd pedal.

Edit: this was a Midas, not an AutoZone.

People just did that with Google search previously. And their crazy uncle before that.

unfortunately AI models are not perfect

There sure are a lot of data centers being built, supply chains being destroyed, risks of ruining the economy, water being consumed, electricity being burned, and overall societal costs being levied over this imperfect tech.

I can’t be the only one who thinks that if you do stupid illegal shit because your crazy uncle, the voices in your head, or an AI mirror told you to, you don’t get to use the excuse that you were just following orders from any of them.

That’s not the problem. The problem is having a “let’s turn Chris’ mental illness, which has harmed no one so far, into everyone’s violent problem!” machine.

that’s a bad machine.

The difference is when an LLM tells you, it’s news.

Besides, what are you gonna do if you ask AI how many rocks to eat? NOT eat rocks? People can’t handle responsibility like that.

This is such an individualist framing.

Floridaman is not making any excuses here. He can’t. Because he’s dead.

Power imbalance is what validates that excuse. Orders from a crazy uncle are a great excuse, at least until you’re 10 or so. A billion+ dollar LLM company has a lot more resources, capability, and therefore responsibility than the poor bastards engaged with it.

Not just suicide assistance chat bots, but suicide promotion chat bots.

To be fair I think that’s a very harsh depiction of the events.

It’s totally lacking the perspective of the shareholder. They were promised money and they have emotions too. Google shareholders deserve better representation!

/$ obviously

Edit-pre: To be clear…

I use LLMs rarely (personal reasons) and never for certain things like writing and math (professional reasons) but this comment is not an “AI good/bad” take, just a practical question of tool safety/regs.

AI, including LLMs, is forevermore just a tool in my mind. And we wouldn’t have OSHA/BMAS/HSE/etc if idiots didn’t do idiot things with tools.

But there’s evidently a certain type of idiot that’s spared from their idiocy only by lack of permission.

From who? Depends.

Sometimes they need permission from authority: “god told me to!”

Sometimes they need it from the mob: “I thought I was on a tour!”

And sometimes any fucking body will do: “dare me to do it!”

But all these stories of nutters doing shit AI convinced them to do, from the comical to the deeply tragic, ring the same bonkers bell they always have.

But therein lies the danger unique^1^ to these tools: that they mimic a permission-giver better than any we’ve made.

They’re tailor-made for activating this specific category of idiot, and their likely unparalleled ease-of-use absolutely scales that danger.

As to whether these idiots wouldn’t have just found permission elsewhere, who knows.

My question is whether some kind of training prereq is warranted for LLM usage, as is common with potentially dangerous tools? Is that too extreme? Is it too late for that? Am I overthinking it?

^1^Edit-post: unique danger, not greatest.

Rant/

What is the greatest danger then? IMHO settling for brittle “guard rails” then bulldozing ahead instead of laying groundwork of real machine-ethics.

Hoping conscience is an emergent property of the organic training set is utterly facile, theoretically and empirically. Engineers should know better.

Why is it greatest? Easy. Because some of history’s most important decisions were made by a person whose conscience countermanded their orders. Replacing empathic agents with machines eliminates those safeguards.

So “existential threat” and that’s even before considering climate. /Rant

The LLM just told me to come round to your house and crap in your begonias. You might want to avoid looking out the window until I’m done.

lol and with that you’re a better friend to the begonias than I

that sounds like a regrettable incident

Bullshit

Which part

Google said in response that “unfortunately AI models are not perfect.”

Well yeah, it failed. What a disappointment.

So Google’s AI, or any AI really, likely got this concept from dystopian sci-fi novels.

Since AIs have no concept of context, they won’t really know the difference between fact and fiction, and there we go.

If your AI model isn’t perfect then don’t make people pay fucking money for it you fucking twats

Also, this shit ain’t “lack of perfection”, this is akin to your car brakes suddenly refusing to work right when you get to a red light. If your car is so bad that it kills you, you don’t use it. If the manufacturer knew that it could happen but let you drive it anyway, they’re responsible, they at least get to pay (they should be thrown in jail, really, but different points).

If AI fucks up and people die, the manufacturers shrug, oh well, oh you!

Dystopian scifi novels? More likely from big tech strategy papers